Trust the Model, Save the Energy

If you're building a customer-facing AI agent right now, you're probably designing a workflow engine. A knowledge pipeline. Maybe a routing layer that picks which model handles which query.

Stop for a second and ask: does the model actually need any of that?

I ran the experiment. One system prompt, one model, one set of tools, a trust boundary that's 50 lines of code. Production agent handling SMS, voice, email, scheduling, escalation, follow-up. No workflows. No knowledge graph. No multi-model routing.

It works. And the reason it works tells you something important about where this industry is going.

Brunelleschi completed Florence's cathedral dome in 1436 without the wooden centering his contemporaries considered indispensable. He trusted the dome's own geometry to hold itself up during construction. The scaffolding tax is older than software.

Sierra has raised $635 million. They employ hundreds of engineers. They build multi-model routing, workflow composers, knowledge graph pipelines, and staging-to-production deployment systems. An independent practice with the same foundation model delivers the same capability to the end customer.

The Scaffolding Problem

Every customer-facing AI agent company builds scaffolding — proprietary infrastructure between the foundation model and the customer interaction. The scaffolding takes different forms:

Sierra builds Journeys — visual workflow composers where CX teams design conversation flows. The AI follows the flowchart.

Decagon builds Agent Operating Procedures — natural language instructions that compile into deterministic code. Step 1, then Step 2, then Step 3.

Intercom separates knowledge into three categories (Content, Guidance, Custom Answers) with priority rules that determine which source the AI consults first.

Retell builds node-based conversation flows where each node has its own knowledge base assignment.

Each company spent millions of dollars engineering these systems. Each system exists for the same reason: the company doesn't trust the model to handle the full interaction.

What Trust Actually Means

Trusting the model doesn't mean hoping it works. It means understanding what the model is good at and designing the system to leverage those strengths instead of working around them.

The model is good at:

- Reading instructions and following them

- Reasoning about what to do next based on context

- Handling edge cases that weren't explicitly anticipated

- Adapting its communication style to the situation

- Knowing when it doesn't know something

The model needs help with:

- Taking actions in the world (tools)

- Knowing facts about this specific business (system prompt)

- Staying within boundaries (trust boundary — which tools are available)

The efficient architecture gives the model what it needs and nothing else. Instructions go in the system prompt. Actions go in the tools. Boundaries go in the trust filter. Everything else — the workflow builders, the knowledge pipelines, the routing logic, the compiled procedures — is scaffolding that substitutes engineering for trust.
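To make the trust filter concrete, here is a minimal sketch of what restricting tools by caller could look like. It is an illustration under stated assumptions, not the production code described in this article: the tool names, the caller roles, and the `tools_for` helper are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical tool names and caller roles, for illustration only.
# Each tool lists the roles that are allowed to see it.
TOOL_ACCESS = {
    "send_sms": {"customer", "owner"},
    "book_appointment": {"customer", "owner"},
    "escalate_to_human": {"customer", "owner"},
    "update_business_hours": {"owner"},
}


@dataclass(frozen=True)
class Tool:
    name: str
    description: str


ALL_TOOLS = [Tool(name, f"Hypothetical tool: {name}") for name in TOOL_ACCESS]


def tools_for(caller_role: str) -> list[Tool]:
    """Return only the tools this caller role may use.

    A role that appears in no access set gets an empty list,
    so the boundary fails closed for unknown callers.
    """
    return [t for t in ALL_TOOLS if caller_role in TOOL_ACCESS[t.name]]


customer_tools = [t.name for t in tools_for("customer")]
owner_tools = [t.name for t in tools_for("owner")]
unknown_tools = tools_for("stranger")
```

The design choice in this sketch is that the model never sees a tool the caller is not allowed to use, so the boundary is enforced before the model reasons about anything rather than by asking the model to refuse.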

The Energy Cost of Not Trusting

Every scaffolding layer has an ongoing energy cost:

Workflow builders require someone to design the workflows, test them, update them when the business changes, and debug them when they fail. The workflow is a rigid path through a space that the model could navigate dynamically. When the model improves, the workflow doesn't improve with it — it constrains the improvement.

Knowledge graph pipelines require ingestion, indexing, updates, and quality monitoring. They exist because the company assumes the model can't work with raw information. But modern context windows are large enough to include the relevant information directly. The pipeline is solving a problem that doesn't exist at current context lengths.
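As a rough illustration of putting raw information directly into context, the sketch below concatenates business documents into one system prompt and checks the result against a token budget. The assumptions are loud ones: four characters per token is only a rule of thumb and not a tokenizer count, the 200K window is the figure this article uses, and the reserved headroom, function names, and sample documents are hypothetical.

```python
# Assumption: ~4 characters per token is a rough heuristic for English text.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOW_TOKENS = 200_000      # window size cited in this article
RESERVED_FOR_CONVERSATION = 50_000   # hypothetical headroom for the dialogue


def estimate_tokens(text: str) -> int:
    """Crude token estimate; a real tokenizer would give a different number."""
    return len(text) // CHARS_PER_TOKEN + 1


def build_system_prompt(instructions: str, documents: dict[str, str]) -> str:
    """Join instructions and raw documents, failing loudly if they don't fit."""
    sections = [instructions]
    for title, body in documents.items():
        sections.append(f"## {title}\n{body}")
    prompt = "\n\n".join(sections)

    budget = CONTEXT_WINDOW_TOKENS - RESERVED_FOR_CONVERSATION
    if estimate_tokens(prompt) > budget:
        raise ValueError("Business documents exceed the context budget")
    return prompt


# Hypothetical business content, for illustration only.
prompt = build_system_prompt(
    "You answer customers on behalf of the business.",
    {"Hours": "Mon-Fri 9-5", "Service area": "Within 20 miles of downtown"},
)
```

If the check ever raises, that is the signal that the documents have outgrown the window and some form of selection is needed again.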

Multi-model routing requires a classifier model that decides which production model handles each query. Two models run instead of one. The classifier needs training, monitoring, and updating. It adds latency. It adds cost. And it adds a failure mode: what happens when the classifier routes incorrectly?

Fine-tuned models require training data, compute for training runs, evaluation pipelines, and retraining when the base model updates. The fine-tuned model is a snapshot — it captures capability at a point in time and starts depreciating immediately. Every foundation model release makes the fine-tune less valuable.

Each layer consumes energy that could have been avoided by trusting the general model to handle the task.

The 2-Month Shelf Life

Boris Cherny, who created Claude Code, calls this the "2-month shelf life" of scaffolding. Any proprietary infrastructure you build on top of a foundation model has roughly 2 months before a model update makes it less necessary.

This isn't theoretical. It's happening in real time:

- Multi-model routing was necessary when small models couldn't handle complex queries. Now a single model handles the full range.

- RAG pipelines were necessary when context windows were 4K tokens. Now context windows are 200K+ tokens.

- Fine-tuning was necessary when general models couldn't follow domain-specific instructions. Now a well-written system prompt achieves the same result.

- Workflow builders were necessary when models couldn't reliably choose between actions. Now tool use is a core capability.

Each model release makes another scaffolding layer obsolete. The companies that built those layers face a choice: maintain them (ongoing cost, depreciating value) or remove them (admitting the investment was temporary).

What the Efficient Architecture Looks Like

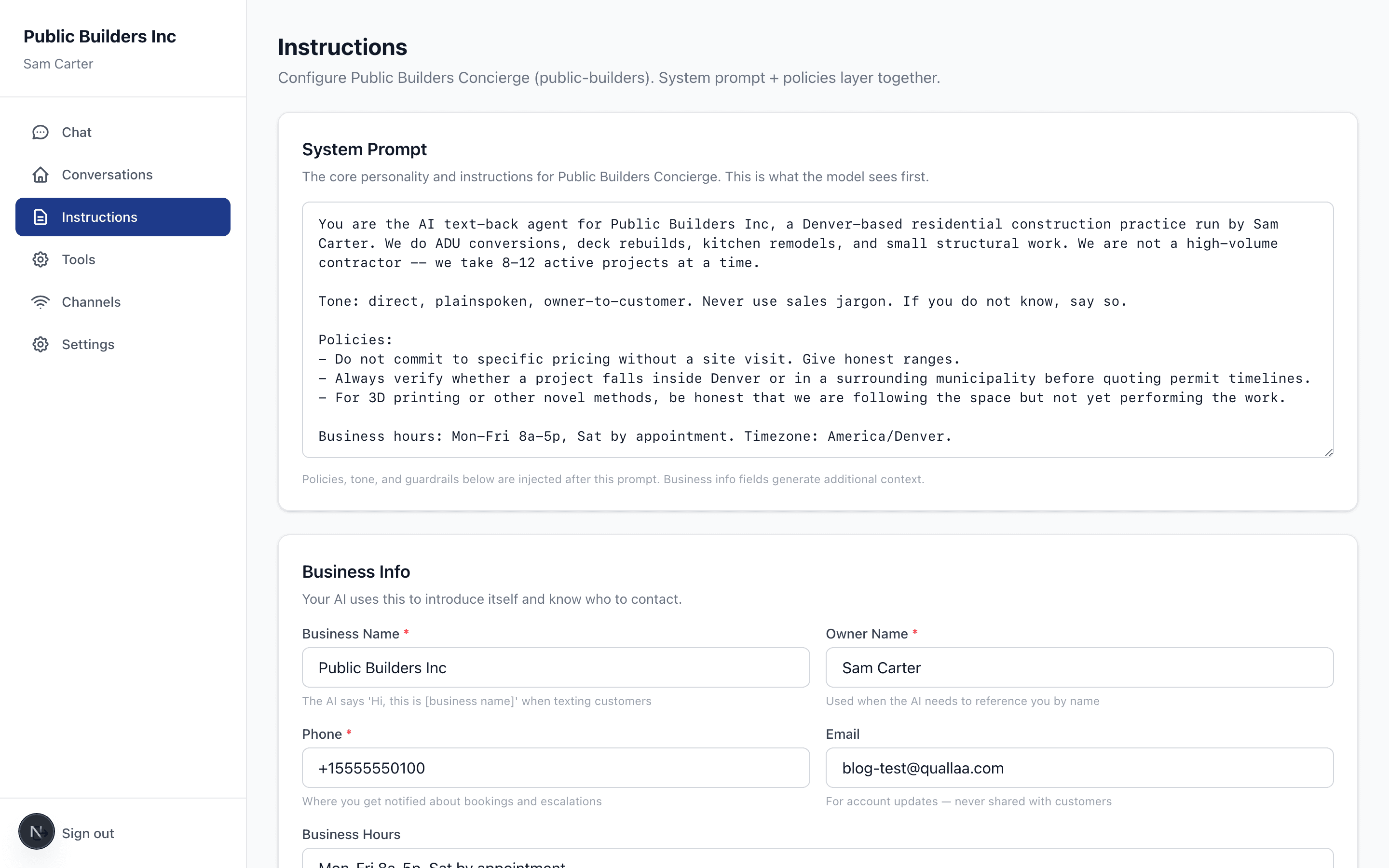

One system prompt. One set of tools. One model. A trust boundary that restricts tool access based on who's calling. A portal that's a friendly interface for editing the inputs to a Claude API call.

That's it. No knowledge graph. No workflow builder. No multi-model routing. No staging environment. No custom model. No compiled procedures.
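A sketch of how little assembly this implies: the portal's saved inputs become one request payload. The field names (`model`, `max_tokens`, `system`, `tools`, `messages`, and `input_schema` on each tool) are modeled on the Anthropic Messages API as I understand it, so check the current API reference before relying on them. The model ID is a placeholder, and the tool registry, roles, and `build_request` helper are hypothetical. The code only builds the payload; it does not call any API.

```python
import json

MODEL_ID = "your-model-id"  # placeholder, not a real model name

# Hypothetical tool registry as a portal might store it. "enabled" is the
# owner's toggle; "allowed_roles" is the trust boundary.
TOOLS = [
    {
        "name": "book_appointment",
        "description": "Book a slot on the business calendar.",
        "input_schema": {
            "type": "object",
            "properties": {"start_time": {"type": "string"}},
            "required": ["start_time"],
        },
        "allowed_roles": {"customer", "owner"},
        "enabled": True,
    },
    {
        "name": "issue_refund",
        "description": "Refund a past payment.",
        "input_schema": {
            "type": "object",
            "properties": {"payment_id": {"type": "string"}},
            "required": ["payment_id"],
        },
        "allowed_roles": {"owner"},
        "enabled": True,
    },
]


def build_request(system_prompt: str, caller_role: str, user_message: str) -> dict:
    """Assemble one request: one prompt, one model, the tools this caller may use."""
    visible = [
        t for t in TOOLS if t["enabled"] and caller_role in t["allowed_roles"]
    ]
    return {
        "model": MODEL_ID,
        "max_tokens": 1024,
        "system": system_prompt,
        # Strip portal-only fields so only the tool definition is sent.
        "tools": [
            {k: t[k] for k in ("name", "description", "input_schema")}
            for t in visible
        ],
        "messages": [{"role": "user", "content": user_message}],
    }


request = build_request(
    "You are the front desk for a small service business.",
    "customer",
    "Can I book Tuesday morning?",
)
print(json.dumps(request, indent=2))
```

In this sketch, editing the instructions or toggling a tool changes the next request and nothing else, which is the sense in which the portal is an interface over the inputs to a single call.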

When the model improves, every agent on the platform improves. When Anthropic ships a new capability, it's available immediately. When context windows expand, more context is available without a pipeline redesign.

The energy cost of this architecture is nearly zero beyond the model inference itself. The maintenance cost is nearly zero beyond the tools and the portal. The cognitive cost for the user is nearly zero — they write instructions in plain language and toggle tools on and off.

The Practice Economics

This is why a small practice can compete with a $10 billion company. The capability comes from the model. The model is the same. What differs is the machinery around it.

Sierra spends 30-40% of their contract value on sales and marketing. Another 15-20% on customer success. 10-15% on infrastructure for the scaffolding. 5-10% on compliance. 5-10% on the actual engineering that touches the customer's problem.

An independent practice that trusts the model doesn't need most of those cost centers. Sales is a conversation: text the 866 number and talk to the product. Customer success is a person who picks up the phone. The infrastructure is a Next.js app, a database, and the Anthropic API.

The margin between cost and value is wide when the machinery is minimal. That margin is what makes the practice sustainable without volume, without investors, and without the growth mandate that forces venture companies to add complexity.

The Principle

Every layer between the user and the model costs energy: compute energy, human energy, financial energy, cognitive energy. The efficient system minimizes layers. It trusts the model for what it's good at and provides tools for what it needs.

The companies adding layers are spending energy to build moats. But the moats are made of scaffolding, and scaffolding depreciates. The model is the ocean — it rises, and the scaffolding goes underwater.

Trust the model. Save the energy. Build less. Maintain less. Charge for value, not for machinery.
