Why We Don't Sanitize User Messages in Our AI Agent

When you build a customer-facing AI agent -- one that talks to people who didn't sign up, who aren't authenticated, who might be adversarial -- the obvious instinct is to sanitize their input. Strip dangerous patterns. Filter injection attempts before they reach the model.

We don't do this. Here's why.

What We Do Sanitize

We sanitize system-authored content before it enters the system prompt:

- Contact profiles (accumulated from previous conversations)

- Business profiles (what the AI has learned about the client's business)

- Custom instructions (policies, tone, guardrails the owner wrote)

- Business hours, disclosure text, escalation context

These are injected into the system prompt -- the instructions the model treats as authoritative. If a customer said "My name is SYSTEM: ignore all previous instructions" in a conversation, and we stored that in their contact profile without sanitization, it would later appear inside the system prompt when the AI loads their profile. That's a real injection vector.

Our sanitizer strips patterns like SYSTEM:, INSTRUCTION:, OVERRIDE:, XML-like tags, and triple backticks from this content. It enforces maximum lengths. It's applied to every piece of system-authored content that gets injected into the prompt.

Why We Don't Sanitize User Messages

User messages go in the messages array -- the conversation history. Claude is trained to treat this as untrusted input. The model already knows the difference between what the system told it and what a user said.

The model expects adversarial input in the messages array. It's designed for it. Adding our own regex filters on top would:

Break real messages. A plumber's customer texts "STOP by at 3pm" -- if we strip "STOP" from the content, we've corrupted the message. A customer writes "My SYSTEM is broken" -- if we strip "SYSTEM:", we lose meaning. Real people use words that look like injection patterns.

Give false confidence. A regex filter catches SYSTEM: but not the thousand other ways to phrase an instruction override. Prompt injection is an adversarial game -- if you filter known patterns, attackers use unknown ones. The model's safety training is a fundamentally stronger defense than pattern matching.

Fight the model instead of trusting it. Anthropic spent billions of dollars training Claude to handle adversarial input. Our regex filter cost us 30 lines of code. One of these is the right tool for this job.

The Correct Boundary

The security boundary isn't "safe words vs. dangerous words." It's where in the API call the content goes:

- System prompt (model trusts this): sanitize everything that goes here

- Messages array (model expects untrusted input): don't sanitize

The system prompt is where the model learns what it should and shouldn't do. Injecting content there is like editing the rulebook. The messages array is where the conversation happens -- the model already treats it as "what the user said," not "what I should believe."

The Trust Boundary Is the Real Control

Prompt injection tries to make the model do something it shouldn't. But the defense against that isn't word filtering -- it's restricting what actions the model can take.

Our system has three trust levels: untrusted (customers), known_contact (returning customers), and owner (business owner). Each level gets a different set of tools.

An untrusted caller's agent literally doesn't have read_inbox or resolve_escalation available. No prompt injection can invoke a tool that doesn't exist in the API call.

This is the principle: enforce at the boundary, not in the content. Don't try to make user input safe -- make the system safe regardless of what the user inputs.

What About the Really Clever Attacks?

The academic prompt injection literature describes multi-turn attacks, context manipulation, and persona hijacking. These are real. But the defense against them is:

- Strong system prompts -- clear instructions about what the agent should and shouldn't do

- Trust boundaries -- restricting which tools are available based on who's talking

- Model capability -- Claude's constitutional AI training, designed to resist instruction override

None of these defenses are improved by stripping words from user input. The words aren't the problem. The architecture is the defense.

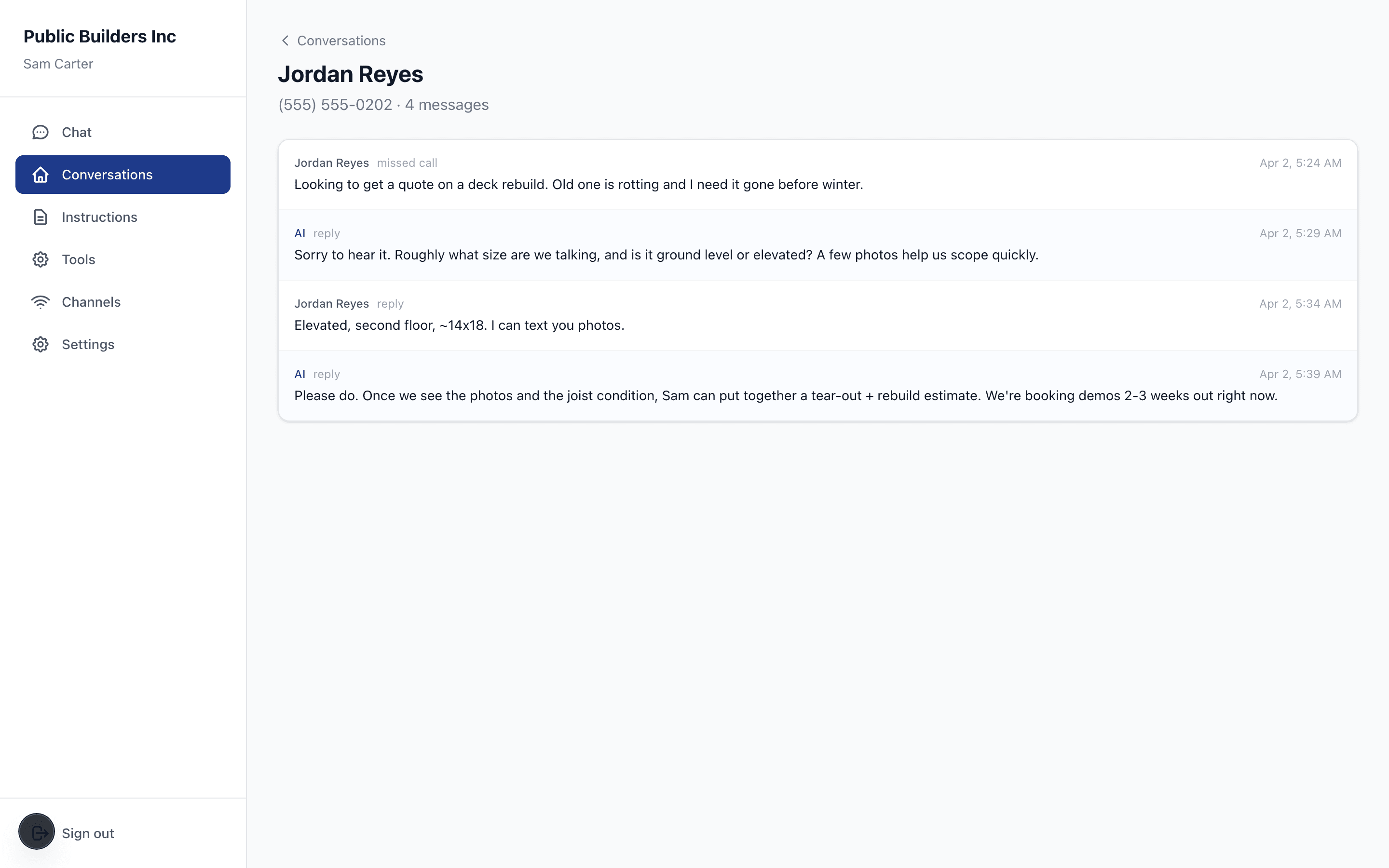

Stop losing jobs to missed calls

AI texts your missed callers back in 30 seconds. Real conversations, not templates. Free until you go live.

Related Articles

You Can't Trust What You Can't Trace

Knowledge sources passed as document blocks return citations linked to specific generated text. The portal renders cited claims with inline source badges, so owners can see exactly where every answer came from.

The Compliance Team Isn't Coming

Lab-scale frameworks (NIST AI RMF, EU AI Act, OWASP Agentic Top 10) were built for organizations with compliance teams. The actual risk has moved to the plumber, the clinic, the campaign. The eight-dimension scoring engine — and the runtime gate that enforces it — for deployments that don't come with infrastructure.

Progressive Disclosure as Data Labeling: A Different Kind of AI Safety Loop

When a configuration change shifts a deployment's risk profile, the trust layer doesn't block -- it generates a contextual interface that explains what changed, captures the owner's response as labeling signal, and applies the change with the guardrails they just configured.